Announcing respyra: An Open-Source Toolbox for Respiratory Tracking in Interoception Research

posted on February 27, 2026

We’re happy to announce the first public release of respyra, a Python toolbox for respiratory motor tracking experiments. The package is available now on PyPI and the accompanying preprint is up on PsyArXiv.

What is respyra?

Interoception research has long focused on how people perceive internal signals like heartbeats. But the control of bodily signals – particularly how we voluntarily regulate and adapt our respiratory patterns through respiratory motor tracking – has received far less attention. respyra was built to open up this space.

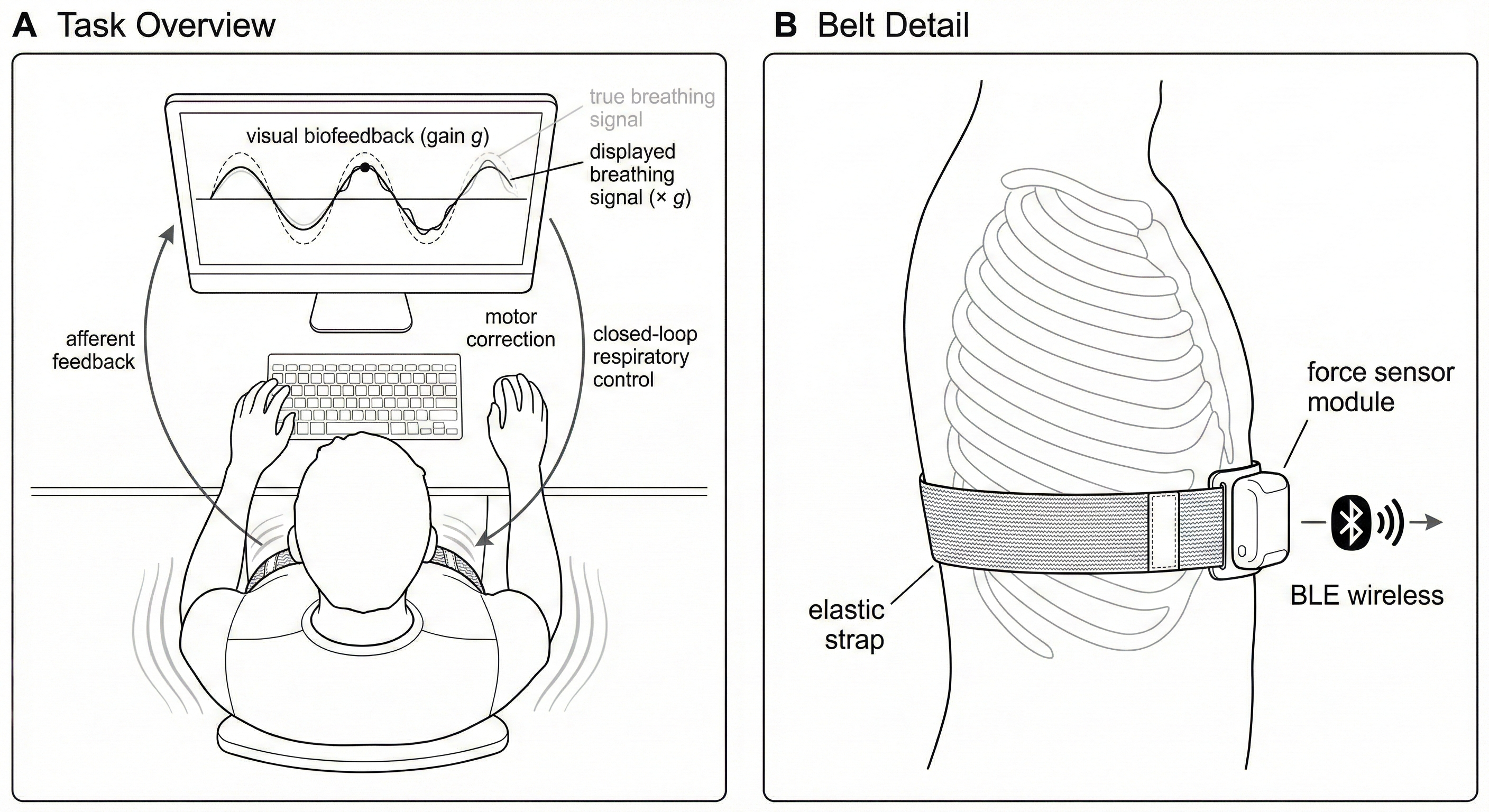

The toolbox integrates a Vernier Go Direct Respiration Belt with PsychoPy to create a closed-loop breathing paradigm. Participants wear a wireless chest-mounted force sensor and follow a sinusoidal target dot on screen with their breathing. The display provides continuous color-coded feedback, going from green when tracking is accurate through to red when it drifts.
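To make the graded feedback concrete, here is a minimal sketch of how an absolute tracking error could be mapped onto a green-yellow-red color ramp. The function name `error_to_rgb` and the `max_error` saturation point are hypothetical and chosen for illustration; this is not respyra's actual API or its actual color mapping.

```python
def error_to_rgb(abs_error, max_error=1.0):
    """Map |error| to an (r, g, b) tuple in 0..1, running green -> yellow -> red.

    `max_error` is a hypothetical saturation point: any error at or above it
    is drawn fully red.
    """
    # Normalise the error and clamp it to the interval [0, 1].
    x = min(max(abs_error / max_error, 0.0), 1.0)
    if x < 0.5:
        # First half of the ramp: green (0, 1, 0) to yellow (1, 1, 0).
        return (2.0 * x, 1.0, 0.0)
    # Second half of the ramp: yellow (1, 1, 0) to red (1, 0, 0).
    return (1.0, 2.0 * (1.0 - x), 0.0)
```

Note that PsychoPy's default `rgb` color space runs from -1 to 1, so values like these would need rescaling (or a different color space setting) before being passed to a stimulus.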

What makes this more than a simple biofeedback tool is the visuomotor perturbation capability. Drawing on the reaching adaptation literature, respyra can amplify or attenuate the visual representation of the participant’s breathing without changing the target or the feedback. At a gain of 2.0, for example, every deviation from the target looks twice as large on screen, forcing the participant to halve their breathing amplitude to visually match the target. This creates a principled way to study respiratory motor recalibration.
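One way to picture the perturbation is as a gain applied to the participant's deviation from the target before it is drawn. The sketch below takes the "every deviation looks twice as large" description literally; whether respyra scales the error or the raw breathing trace is an assumption here, and the function names and default parameters are illustrative only.

```python
import math


def target_position(t, freq_hz=0.2, amplitude=1.0):
    """Sinusoidal target at time t (seconds); the defaults are illustrative."""
    return amplitude * math.sin(2.0 * math.pi * freq_hz * t)


def displayed_position(actual, target, gain=1.0):
    """Return where the participant's trace is drawn on screen.

    Assumption: the gain scales the deviation from the target, so gain=1.0
    is veridical feedback, gain=2.0 doubles every visible error, and
    gain=0.5 halves it. The target itself is never altered.
    """
    return target + gain * (actual - target)
```

Under this reading, a participant who is 0.1 units above the target sees a 0.2-unit error at a gain of 2.0, and sees their true position at a gain of 1.0.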

What’s in the box

- Real-time display with a scrolling waveform, target dot, and participant trace

- Automated range calibration with percentile-based outlier rejection and sensor saturation warnings

- Configurable conditions built from composable frequency segments, with adjustable feedback gain

- Three feedback modes: graded continuous (green-yellow-red), binary, or trinary

- Non-blocking belt I/O via a background thread so PsychoPy’s frame loop stays smooth

- Crash-resilient logging that flushes every row to CSV, so data is never lost mid-session

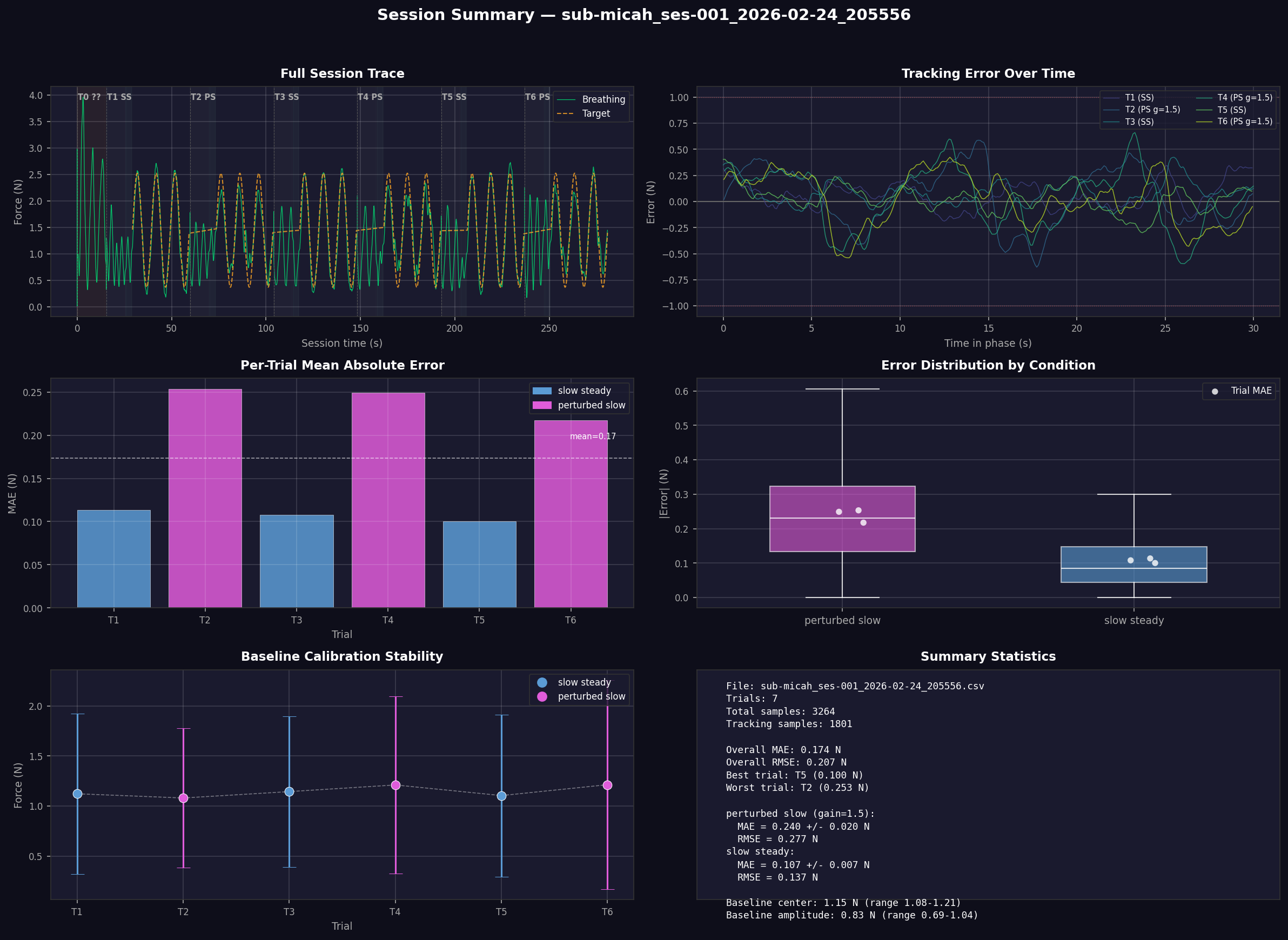

- Post-session visualization (

respyra-plot) producing a 6-panel summary figure for quick data review
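As an illustration of the calibration step listed above, the following sketch estimates a participant's breathing range from percentiles rather than the raw minimum and maximum, so that a few outlying samples (a cough, a belt adjustment) do not stretch the range. The function names, the 5th/95th percentile defaults, and the `sensor_max` parameter are all assumptions made for this example and are not taken from respyra's code.

```python
def percentile(sorted_vals, p):
    """Linearly interpolated percentile (p in 0..100) of a pre-sorted list."""
    k = (len(sorted_vals) - 1) * p / 100.0
    lo = int(k)
    hi = min(lo + 1, len(sorted_vals) - 1)
    return sorted_vals[lo] + (sorted_vals[hi] - sorted_vals[lo]) * (k - lo)


def calibrate_range(samples, low_pct=5.0, high_pct=95.0, sensor_max=None):
    """Return (range_low, range_high, saturated) for a calibration recording.

    `sensor_max` is a hypothetical upper limit of the force sensor; if any
    sample reaches it, the recording is flagged as saturated.
    """
    if not samples:
        raise ValueError("calibration needs at least one sample")
    vals = sorted(samples)
    range_low = percentile(vals, low_pct)
    range_high = percentile(vals, high_pct)
    saturated = sensor_max is not None and vals[-1] >= sensor_max
    return range_low, range_high, saturated
```

The percentile cut-offs trade robustness against range: wider cut-offs keep more of the participant's true breathing excursion but let more artifacts through.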

Validation

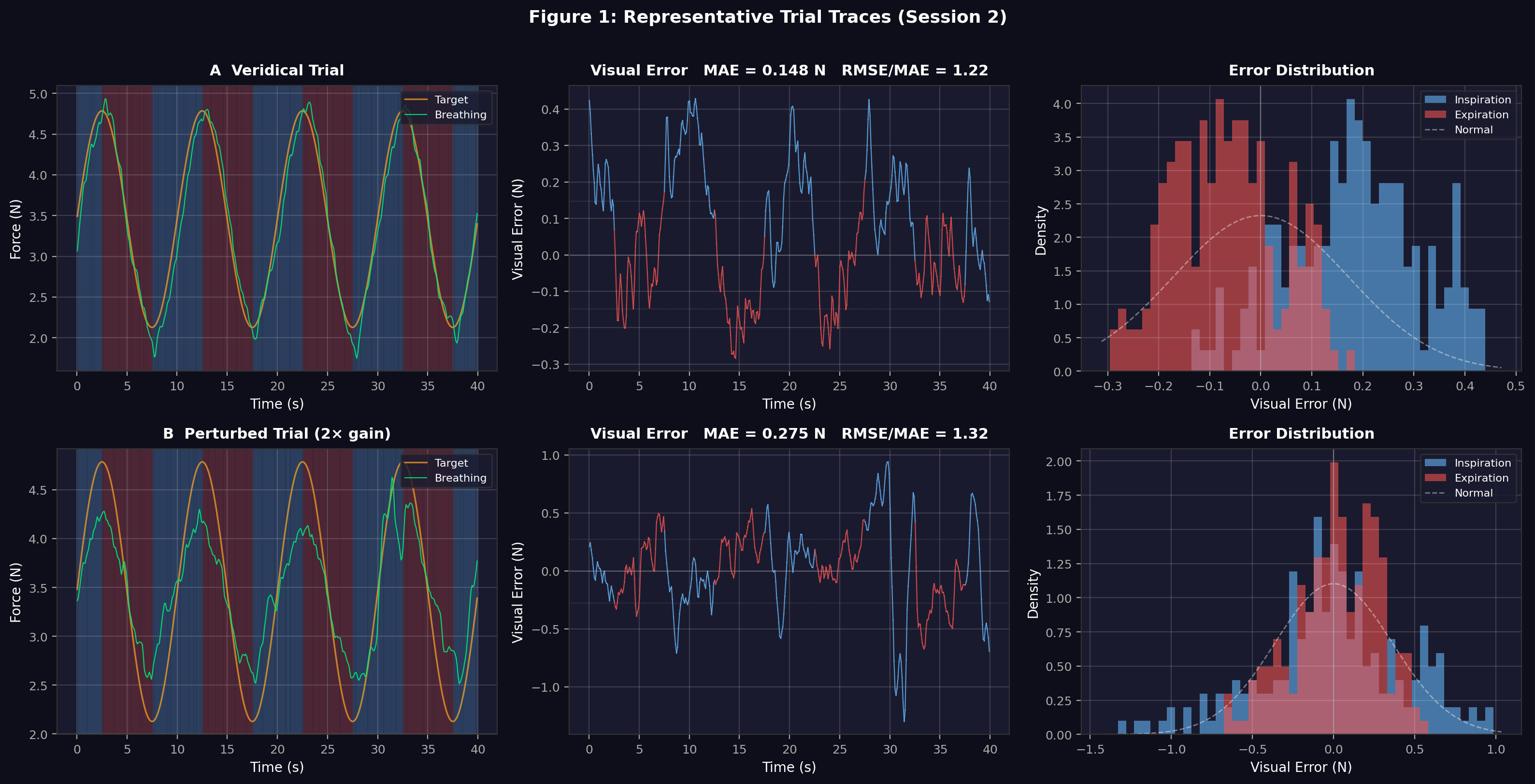

The preprint reports a single-participant validation study across 48 trials. Key findings:

- Under veridical feedback, mean tracking error was 0.24 N with an RMSE/MAE ratio matching the theoretical value for normally distributed errors, indicating stable, well-regulated tracking.

- Under 2x gain perturbation, error roughly doubled as expected, with characteristic signs of overcorrection visible in the error distributions.

- Within-block adaptation was observed in early sessions (the participant learned to compensate for the perturbation within a few trials), with fatigue-driven degradation appearing in later sessions.

- Split-half reliability was strong at r = .86 (Spearman-Brown corrected), suggesting the task is suitable for individual-differences research.
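Two of the statistics above rest on standard formulas that are easy to check by hand. For zero-mean, normally distributed errors the RMSE/MAE ratio is sqrt(pi/2), roughly 1.2533, and the Spearman-Brown correction turns a half-test correlation r into 2r / (1 + r). The sketch below implements both; the function names are illustrative, and the simulated errors are synthetic rather than data from the preprint.

```python
import math
import random

# Expected RMSE/MAE ratio for zero-mean Gaussian errors: sqrt(pi / 2).
GAUSSIAN_RATIO = math.sqrt(math.pi / 2.0)


def rmse_mae_ratio(errors):
    """Ratio of root-mean-square error to mean absolute error."""
    n = len(errors)
    rmse = math.sqrt(sum(e * e for e in errors) / n)
    mae = sum(abs(e) for e in errors) / n
    if mae == 0.0:
        raise ValueError("ratio is undefined when all errors are zero")
    return rmse / mae


def spearman_brown(r_half):
    """Step a split-half correlation up to full-length reliability."""
    return 2.0 * r_half / (1.0 + r_half)


# Synthetic check: Gaussian errors should give a ratio close to GAUSSIAN_RATIO.
rng = random.Random(0)
simulated = [rng.gauss(0.0, 0.3) for _ in range(20000)]
print(rmse_mae_ratio(simulated), GAUSSIAN_RATIO)
```

A ratio well above sqrt(pi/2) points to heavy-tailed errors (occasional large misses), while a ratio near 1 means the errors are almost constant in magnitude.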

Why release now?

We’re deliberately releasing respyra at an early alpha stage. We believe the best way to build a useful tool is to get it into researchers’ hands early, collect feedback on what works and what doesn’t, and develop it collaboratively.

The roadmap is ambitious. In the near term, we plan to expand the condition library with box breathing, oddball paradigms, ramp conditions, and adaptive difficulty scaling. We’re organizing a multi-site validation study with several labs participating to properly characterize the task and establish normative data across a range of participants.

Further down the road, we envision expanding the analysis toolkit with time-frequency analysis, phase-locking metrics, and group-level visualization. We’re also interested in gamified versions of the task for pediatric and clinical samples, where sustained attention to a sinusoidal dot may not be the most engaging paradigm.

Get involved

If your lab works on breathing, interoception, or respiratory psychophysiology, we’d welcome your involvement. We’re particularly looking for collaborators interested in:

- Piloting the task in your own lab and providing feedback on usability and data quality

- Participating in the multi-site validation study to establish reliability and norms across diverse samples

- Contributing new conditions or analysis methods to the toolbox

- Adapting the toolbox to work with other respiratory sensors

You can install respyra from PyPI today, explore the documentation, and run a no-hardware demo to see the display in action:

pip install respyra

python -m respyra.demos.demo_display

The code is on GitHub under an MIT license. Issues, pull requests, and feature suggestions are all welcome.

To get in touch about collaboration, contact micah@cfin.au.dk.

Citation

If you use respyra in your research, please cite the preprint:

Allen, M. (2026). respyra: A General-Purpose Respiratory Tracking Toolbox for Interoception Research. PsyArXiv. https://osf.io/preprints/psyarxiv/wjuce_v1